Scraping addresses from the internet can be done for many reasons.

After all, this kind of data can be pretty valuable.

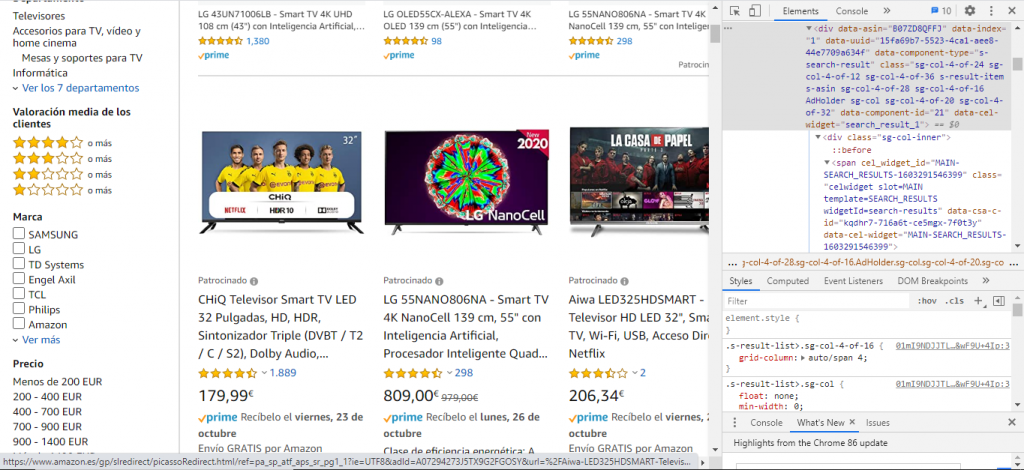

The problem is how tedious the data collection process can be, especially if you want to extract the data to an Excel sheet or JSON file.

This is where web scraping can help.

Free and Easy Web Scraping

A web scraper lets you load any website, select the data you want, and automatically extract it into a new format.

ParseHub is a powerful and free web scraper that works with any website. With ParseHub, we can load up a website such as Yelp and scrape as many addresses as we want.

All we have to do is select the data we want and let ParseHub run the scrape for us. Quick and easy.

Scraping Addresses from Yelp

Before we get started, make sure to download and install ParseHub.

- Open ParseHub, click on New Project, and enter the URL you want to scrape. In this case, we will scrape the Yelp search results page for coffee shops in Toronto. Once you submit the URL, the page will be rendered inside the app.

- Click on the name of the first business on the page. It will be highlighted in green to indicate that it has been selected. The rest of the names on the page will be highlighted in yellow. In the left sidebar, rename your selection to business.

- Now click on the second business name on the list. This selects all of the business names on the page.

- Click on the PLUS (+) sign next to your business selection and choose the Relative Select command.

- Using the Relative Select command, click on the name of the first business on the list and then on the business address. An arrow will appear to show the association you’re creating. Rename your new selection to address.

- You can repeat the previous step to also scrape other data such as phone number, category and more. In this case, we will keep it to just the address.
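For readers curious about what the business/address association amounts to in code, here is a minimal Python sketch using only the standard library. It is not how ParseHub works internally. The HTML snippet and the class names `listing`, `business`, and `address` are made up for illustration and do not reflect Yelp's real markup.

```python
from html.parser import HTMLParser

# Hypothetical markup: these class names are assumptions for this sketch,
# not Yelp's actual page structure.
SAMPLE_HTML = """
<div class="listing"><a class="business">Cafe One</a><span class="address">1 King St W</span></div>
<div class="listing"><a class="business">Cafe Two</a><span class="address">22 Queen St E</span></div>
"""


class ListingParser(HTMLParser):
    """Collect one {business, address} record per listing element."""

    def __init__(self):
        super().__init__()
        self.records = []
        self._field = None  # name of the field whose text is being read

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if css_class == "listing":
            # A new listing starts: open an empty record for it.
            self.records.append({"business": "", "address": ""})
        elif css_class in ("business", "address") and self.records:
            self._field = css_class

    def handle_data(self, data):
        # Text only counts when it sits inside a business or address element.
        if self._field:
            self.records[-1][self._field] += data.strip()

    def handle_endtag(self, tag):
        self._field = None


def parse_listings(html):
    parser = ListingParser()
    parser.feed(html)
    return parser.records


records = parse_listings(SAMPLE_HTML)
```

Each address ends up in the same record as the business it belongs to, which is the same pairing the arrow in the Relative Select step represents.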

Adding Pagination

ParseHub is currently only pulling data from the first page of listings. Next up, we'll set it up to scrape additional pages of data.

- Click on the PLUS (+) sign next to your page selection and choose the Select command.

- Scroll all the way to the bottom of the page and click on the “next page” link. Rename your selection to next.

- Use the icon beside your next selection to expand it.

- Then, delete both extract commands under your selection.

- Click on the PLUS (+) sign next to your next selection and choose the Click command. A pop-up will appear asking you if this is a next page button. Click on “Yes” and enter the number of times you’d like to repeat this process. In this case, we will run it 5 more times.
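The pagination logic set up above (scrape the first page, then follow the "next" link a fixed number of times) can be sketched in Python roughly as follows. This is an illustration of the idea, not ParseHub's implementation. The `fetch_page` callback, the page dictionaries, and the URLs in `FAKE_SITE` are all hypothetical stand-ins so the example runs without network access.

```python
def scrape_with_pagination(start_url, fetch_page, max_next_clicks=5):
    """Scrape the first page, then follow "next" up to max_next_clicks times."""
    results = []
    url = start_url
    for _ in range(max_next_clicks + 1):  # the first page plus the repeats
        page = fetch_page(url)
        results.extend(page["addresses"])
        url = page.get("next")
        if url is None:  # no next-page link left: stop early
            break
    return results


# Stand-in for real pages (hypothetical URLs and data).
FAKE_SITE = {
    "/search?page=1": {"addresses": ["1 King St W"], "next": "/search?page=2"},
    "/search?page=2": {"addresses": ["22 Queen St E"], "next": "/search?page=3"},
    "/search?page=3": {"addresses": ["333 Bloor St W"], "next": None},
}

all_addresses = scrape_with_pagination("/search?page=1", FAKE_SITE.__getitem__)
first_two_pages = scrape_with_pagination(
    "/search?page=1", FAKE_SITE.__getitem__, max_next_clicks=1
)
```

Capping the number of repeats keeps the run bounded even on sites with hundreds of result pages.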

Running Your Scrape

Now it’s time to run your new scraping project.

To do this, click on the green “Get Data” button on the left sidebar. Here you can test, schedule, or run your scraping project.

In this case, we will run it right away. ParseHub will now scrape all the data you’ve selected in the cloud. You will receive a notification once your scrape is complete.

Closing Thoughts

After your scrape is complete, you will be able to download your data as an Excel sheet or JSON file.
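If you download the JSON file, a few lines of Python are enough to tidy it up. The sketch below assumes the export is an object with a `business` list whose items have `name` and `address` keys. That shape is a guess based on the selection names used in this tutorial, so check your actual file before relying on it.

```python
import csv
import io
import json

# Hypothetical export contents; the real file's structure may differ.
EXPORT_JSON = """
{"business": [
  {"name": "Cafe One", "address": "1 King St W"},
  {"name": "Cafe Two", "address": "22 Queen St E"},
  {"name": "Cafe One", "address": "1 King St W"}
]}
"""


def load_unique_rows(json_text):
    """Parse the export and drop exact duplicate name/address pairs."""
    rows, seen = [], set()
    for item in json.loads(json_text)["business"]:
        key = (item["name"], item["address"])
        if key not in seen:
            seen.add(key)
            rows.append({"name": item["name"], "address": item["address"]})
    return rows


def rows_to_csv(rows):
    """Serialize rows as CSV text, which spreadsheet tools can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "address"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = load_unique_rows(EXPORT_JSON)
csv_text = rows_to_csv(rows)
```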

You can now easily use this data for whatever purpose you have in mind. If you want to learn more, read our guide on how to extract more data from Yelp or from YellowPages.

Web scraping, web crawling, HTML scraping, and any other form of web data extraction can be complicated. Between obtaining the correct page source, parsing it correctly, rendering JavaScript, and getting the data into a usable form, there’s a lot of work to be done. Different users have very different needs, and there are tools out there for all of them: people who want to build web scrapers without coding, developers who want to build web crawlers to crawl large sites, and everyone in between. Here is our list of the 10 best web scraping tools on the market right now. From open source projects to hosted SaaS solutions to desktop software, there is sure to be something for everyone looking to make use of web data!

1. Scraper API

Website: https://www.scraperapi.com/

Who is this for: Developers building web scrapers.

Why you should use it: Scraper API handles proxies, browsers, and CAPTCHAs so developers can get the raw HTML from any website with a simple API call. It doesn’t burden you with managing your own proxies. Instead, it manages its own internal pool of hundreds of thousands of proxies from a dozen different proxy providers, and has smart routing logic that routes requests through different subnets and automatically throttles requests in order to avoid IP bans and CAPTCHAs. It’s the ultimate web scraping service for developers, with special pools of proxies for ecommerce price scraping, search engine scraping, social media scraping, sneaker scraping, ticket scraping and more! If you need to scrape millions of pages a month, you can use this form to ask for a volume discount.
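To give a rough idea of the bookkeeping that proxy rotation and throttling involve when you manage them yourself, here is a minimal Python sketch. It is a generic round-robin illustration, not Scraper API's actual routing logic or client code. The `RotatingThrottle` class and the proxy addresses are hypothetical.

```python
import itertools
import time


class RotatingThrottle:
    """Round-robin over proxies and enforce a minimum gap between requests."""

    def __init__(self, proxies, min_interval=1.0, clock=time.monotonic, sleep=time.sleep):
        self._proxies = itertools.cycle(proxies)
        self._min_interval = min_interval
        self._clock = clock  # injectable so the timing can be tested
        self._sleep = sleep
        self._last_request = None

    def next_proxy(self):
        """Wait if the last request was too recent, then return the next proxy."""
        now = self._clock()
        if self._last_request is not None:
            wait = self._min_interval - (now - self._last_request)
            if wait > 0:
                self._sleep(wait)
                now = self._clock()
        self._last_request = now
        return next(self._proxies)


# Hypothetical proxy addresses; min_interval=0.0 so this demo never sleeps.
throttle = RotatingThrottle(["proxy-a:8080", "proxy-b:8080"], min_interval=0.0)
picked = [throttle.next_proxy() for _ in range(3)]
```

A hosted service takes this bookkeeping, along with retries and CAPTCHA handling, off your hands.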

2. ScrapeSimple

Website: https://www.scrapesimple.com

Who is this for: ScrapeSimple is the perfect service for people who want a custom scraper built for them. Web scraping is made as simple as filling out a form with instructions for what kind of data you want.

Why you should use it: ScrapeSimple lives up to its name with a fully managed service that builds and maintains custom web scrapers for customers. Just tell them what information you need from which sites, and they will design a custom web scraper to deliver the information to you periodically (daily, weekly, monthly, or on whatever schedule you choose) in CSV format directly to your inbox. This service is perfect for businesses that just want an HTML scraper without needing to write any code themselves. Response times are quick and the service is incredibly friendly and helpful, making it a good fit for people who just want the full data extraction process taken care of for them.

3. Octoparse

Website: https://www.octoparse.com/

Who is this for: Octoparse is a fantastic tool for people who want to extract data from websites without having to code, while still having control over the full process through an easy-to-use interface.

Why you should use it: Octoparse is the perfect tool for people who want to scrape websites without learning to code. It features a point-and-click screen scraper, allowing users to scrape behind login forms, fill in forms, input search terms, scroll through infinite scroll, render JavaScript, and more. It also includes a site parser and a hosted solution for users who want to run their scrapers in the cloud. Best of all, it comes with a generous free tier allowing users to build up to 10 crawlers for free. For enterprise-level customers, they also offer fully customized crawlers and managed solutions where they take care of running everything for you and deliver the data to you directly.

4. ParseHub

Website: https://www.parsehub.com/

Who is this for: ParseHub is an incredibly powerful tool for building web scrapers without coding. It is used by analysts, journalists, data scientists, and everyone in between.

Why you should use it: ParseHub is dead simple to use. You can build web scrapers simply by clicking on the data that you want, and it then exports the data in JSON or Excel format. It has many handy features such as automatic IP rotation, scraping behind login walls, going through dropdowns and tabs, getting data from tables and maps, and much more. In addition, it has a generous free tier, allowing users to scrape up to 200 pages of data in just 40 minutes! ParseHub is also nice in that it provides desktop clients for Windows, Mac OS, and Linux, so you can use it from your computer no matter what system you’re running.

5. Scrapy

Website: https://scrapy.org

Who is this for: Scrapy is a web scraping library for Python developers looking to build scalable web crawlers. It’s a full-on web crawling framework that handles all of the plumbing (queueing requests, proxy middleware, etc.) that makes building web crawlers difficult.

Why you should use it: As an open source tool, Scrapy is completely free. It is battle tested, has been one of the most popular Python libraries for years, and is probably the best Python web scraping tool for new applications. It is well documented and there are many tutorials on how to get started. In addition, deploying the crawlers is simple and reliable, and the processes can run themselves once they are set up. As a fully featured web scraping framework, it has many middleware modules available to integrate various tools and handle various use cases (handling cookies, user agents, etc.).
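To illustrate the kind of plumbing a crawling framework takes care of, here is a minimal sketch of request queueing with duplicate filtering, written in plain Python. It does not use Scrapy's API. The `crawl` function, the `get_links` callback, and the `LINKS` map are hypothetical and stand in for real HTTP fetching and link extraction.

```python
from collections import deque


def crawl(start_url, get_links, max_pages=100):
    """Breadth-first crawl: a FIFO queue of pending URLs plus a seen set."""
    queue = deque([start_url])
    seen = {start_url}
    order = []
    while queue and len(order) < max_pages:
        url = queue.popleft()
        order.append(url)
        for link in get_links(url):
            if link not in seen:  # never queue the same URL twice
                seen.add(link)
                queue.append(link)
    return order


# Hypothetical link graph standing in for a real website.
LINKS = {"/": ["/a", "/b"], "/a": ["/b", "/c"], "/b": ["/"], "/c": []}
visited = crawl("/", lambda url: LINKS.get(url, []))
```

A real framework layers scheduling, retries, politeness delays, and middleware on top of this basic loop.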

6. Diffbot

Website: https://www.diffbot.com

Who is this for: Enterprises who have specific data crawling and screen scraping needs, particularly those who scrape websites that often change their HTML structure.

Why you should use it: Diffbot is different from most page scraping tools out there in that it uses computer vision (instead of HTML parsing) to identify relevant information on a page. This means that even if the HTML structure of a page changes, your web scrapers will not break as long as the page looks the same visually. This is an incredible feature for long-running, mission-critical web scraping jobs. While they may be a bit pricey (the cheapest plan is $299/month), they do a great job offering a premium service that may make it worth it for large customers.

7. Cheerio

Website: https://cheerio.js.org

Who is this for: NodeJS developers who want a straightforward way to parse HTML. Those familiar with jQuery will immediately appreciate its syntax.

Why you should use it: Cheerio offers an API similar to jQuery, so developers familiar with jQuery will immediately feel at home using Cheerio to parse HTML. It is blazing fast, and offers many helpful methods to extract text, HTML, classes, ids, and more. It is by far the most popular HTML parsing library written in NodeJS, and is probably the best NodeJS or JavaScript web scraping tool for new projects.

8. BeautifulSoup

Website: https://www.crummy.com/software/BeautifulSoup/

Who is this for: Python developers who just want an easy interface to parse HTML, and don’t necessarily need the power and complexity that comes with Scrapy.

Why you should use it: Like Cheerio for NodeJS developers, Beautiful Soup is by far the most popular HTML parser for Python developers. It’s been around for over a decade now and is extremely well documented, with many web parsing tutorials teaching developers to use it to scrape various websites in both Python 2 and Python 3. If you are looking for a Python HTML parsing library, this is the one you want.

9. Puppeteer

Website: https://github.com/GoogleChrome/puppeteer

Who is this for: Puppeteer is a headless Chrome API for NodeJS developers who want very granular control over their scraping activity.

Why you should use it: As an open source tool, Puppeteer is completely free. It is well supported, actively developed, and backed by the Google Chrome team itself. It is quickly replacing Selenium and PhantomJS as the default headless browser automation tool. It has a well thought out API, and automatically installs a compatible Chromium binary as part of its setup process, meaning you don’t have to keep track of browser versions yourself. While it’s much more than just a web crawling library, it’s often used to scrape data from sites that require JavaScript to display information, since it handles scripts, stylesheets, and fonts just like a real browser. Note that while it is a great solution for sites that require JavaScript to display data, it is very CPU and memory intensive, so using it for sites where a full-blown browser is not necessary is probably not a great idea. Most times a simple GET request should do the trick!
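For comparison, the "simple GET request" route needs no browser at all. Here is a minimal sketch using only Python's standard library. The `fetch_html` helper and the `example-scraper/0.1` user agent string are made up for this example.

```python
from urllib.request import Request, urlopen


def fetch_html(url, timeout=10.0):
    """Fetch a page with one plain GET request and return its body as text."""
    # The user agent value is an arbitrary placeholder for this sketch.
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        # Fall back to UTF-8 when the server does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling `fetch_html("https://example.com")` returns the raw HTML as the server sent it. If the data you need is missing from that HTML because the page builds it with JavaScript, that is the point at which a headless browser becomes worth its cost.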

10. Mozenda

Website: https://www.mozenda.com/

Who is this for: Enterprises looking for a cloud-based, self-serve webpage scraping platform need look no further. With over 7 billion pages scraped, Mozenda has experience in serving enterprise customers from all around the world.

Why you should use it: Mozenda allows enterprise customers to run web scrapers on its robust cloud platform. It sets itself apart with its customer service (providing both phone and email support to all paying customers). Its platform is highly scalable and allows for on-premise hosting as well. Like Diffbot, it is a bit pricey, with its lowest plans starting at $250/month.

Honorable Mention 1. Kimura

Website: https://github.com/vifreefly/kimuraframework

Who is this for: Kimura is an open source web scraping framework written in Ruby that makes it incredibly easy to get a Ruby web scraper up and running.

Why you should use it: Kimura is quickly becoming known as the best Ruby web scraping library, as it’s designed to work with headless Chrome/Firefox, PhantomJS, and normal GET requests all out of the box. Its syntax is similar to Scrapy, and developers writing Ruby web scrapers will love all of the nice configuration options to do things like set a delay, rotate user agents, and set default headers.

Honorable Mention 2. Goutte

Website: https://github.com/FriendsOfPHP/Goutte

Who is this for: Goutte is an open source web crawling framework written in PHP that makes it super easy to extract data from HTML/XML responses using PHP.

Why you should use it: Goutte is a very straightforward, no-frills framework that is considered by many to be the best PHP web scraping library, as it’s designed for simplicity, handling the vast majority of HTML/XML use cases without too much additional cruft. It also seamlessly integrates with the excellent Guzzle requests library, which allows you to customize the framework for more advanced use cases.

The open web is by far the greatest global repository of human knowledge, and there is almost no information that you can’t find by extracting web data. Because web scraping is done by people with widely varying levels of technical ability and know-how, there are tools available that serve everyone from people who don’t want to write any code to seasoned developers just looking for the best open source solution in their language of choice.

Hopefully, this list of tools has been helpful in letting you take advantage of this information for your own projects and businesses. If you have any web scraping jobs you would like to discuss with us, please contact us here. Happy scraping!